Smart Data Automation with biGENIUS-X

Easily design, build, and maintain any modern analytical data solution with advanced data automation, no matter the data management approach you choose.

Accelerate your data development lifecycle

Build modern data analytics solutions from scratch, or modernize your existing legacy analytical data solutions with cutting-edge technologies in record time by eliminating manual effort.

Better equip your data team

Advanced automation reduces manual effort and human error, and saves valuable time when building and maintaining your data analytics solutions.

Adaptable to any data approach

Future-proof your data with biGENIUS-X, no matter which data management approach you choose now or adopt later as new technologies emerge.

Fast iterations

Implement new ideas and integrate data into your data analytics solutions in the shortest time possible, and with minimal resources.

Manage scale and complexity

Get out-of-the-box support for platforms, methods, and technologies, and benefit from new technologies thanks to the metadata-driven approach.

Supporting trusted technologies

Powerful features that optimize your workflow

Streamline the management of your analytical data solutions, improve productivity, and make your data work for you.

Metadata-driven approach

biGENIUS-X offers a unique advantage by processing your metadata independently from your data pipeline. This separation ensures that your analytical data solution remains uninterrupted and unaffected by metadata processing. As a result, you gain increased control over your live environment, effectively reducing the potential for errors and enhancing overall system stability.

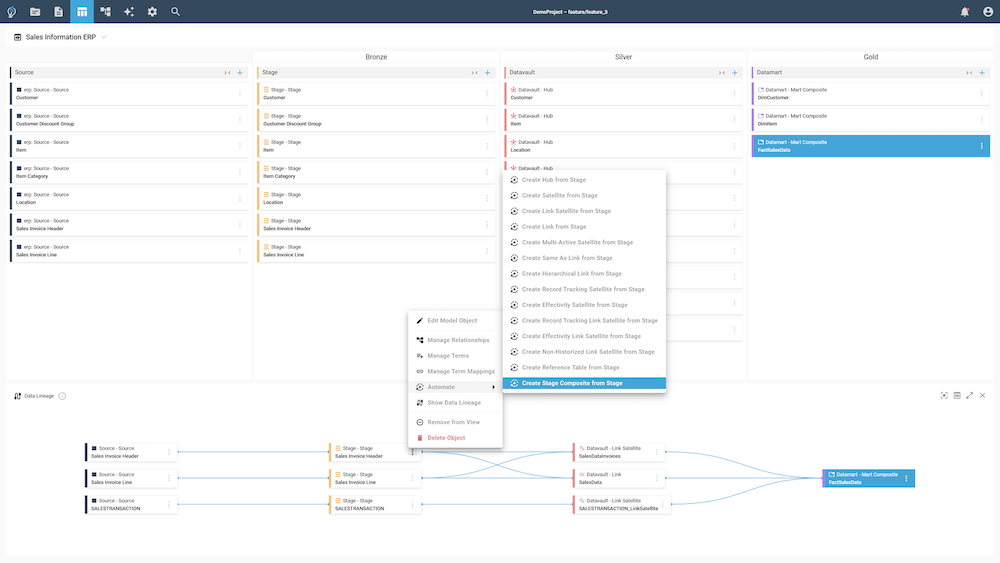
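
To make the idea of processing metadata apart from the data pipeline more concrete, here is a minimal sketch in Python. It is an illustration only and not biGENIUS-X code: the `table_metadata` layout and the `generate_create_table` helper are hypothetical names invented for this example. The point it shows is that a table definition can be turned into SQL text without connecting to, or touching, a live database.

```python
# Hypothetical metadata describing one target table.
# This layout is an assumption for illustration, not a biGENIUS-X format.
table_metadata = {
    "name": "dim_customer",
    "columns": [
        {"name": "customer_id", "type": "INTEGER", "nullable": False},
        {"name": "customer_name", "type": "VARCHAR(100)", "nullable": True},
    ],
}


def generate_create_table(meta):
    """Build a CREATE TABLE statement as text; no database connection is involved."""
    column_lines = []
    for col in meta["columns"]:
        null_clause = "" if col["nullable"] else " NOT NULL"
        column_lines.append(f"    {col['name']} {col['type']}{null_clause}")
    return f"CREATE TABLE {meta['name']} (\n" + ",\n".join(column_lines) + "\n);"


ddl = generate_create_table(table_metadata)
print(ddl)
```

Because the generated statement is plain text, it can be reviewed and tested before anything is deployed to a live environment.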

Data modeling wizards

With biGENIUS-X wizards, your team can efficiently design and develop intricate data models. These user-friendly tools eliminate the need for manual coding, so your team can focus on the core aspects of data modeling. As a result, you can create complex data models faster without ever having to write a single line of code.

Advanced orchestration

With the scheduling engine provided by biGENIUS-X, you can manage and coordinate the load jobs within your data processing pipeline. The engine maintains the proper sequence of data processing, ensuring that each job is executed in the correct order. This helps you optimize your data handling processes and improves efficiency, accuracy, and overall reliability.
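
As a rough illustration of what keeping load jobs in the correct order involves, the sketch below orders jobs by their dependencies using Python's standard `graphlib` module. It is not the biGENIUS-X scheduling engine: the job names and the `execution_order` helper are hypothetical and exist only for this example.

```python
from graphlib import TopologicalSorter

# Hypothetical load jobs, each mapped to the set of jobs it depends on.
dependencies = {
    "load_staging_customers": set(),
    "load_staging_orders": set(),
    "load_dim_customer": {"load_staging_customers"},
    "load_fact_orders": {"load_staging_orders", "load_dim_customer"},
}


def execution_order(deps):
    """Return job names so that every job appears after all of its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

In this sketch, a dimension load always runs after its staging load, and the fact load runs last. If the dependencies contained a cycle, `static_order()` would raise `graphlib.CycleError` instead of returning an order.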

Ready to move to the cloud with biGENIUS-X?

What our customers are saying

See what industry leaders say about biGENIUS and their experience of working with us.